Make your SSR site Lightning Fast with Redis Cache

Redis is an in-memory store that is primarily used as a database. You may have heard of Redis and how cool it is, but never had a real use case for it. In this tutorial, I'll show you how you can leverage Redis to speed up your Server Side Rendered (SSR) web application. If you're new to Redis, check out our guides on Installing Redis and creating key value pairs to get a better idea of how it works.

In this article we'll be looking at how to adjust your Node.js Express application to build in lightning fast caching with Redis.

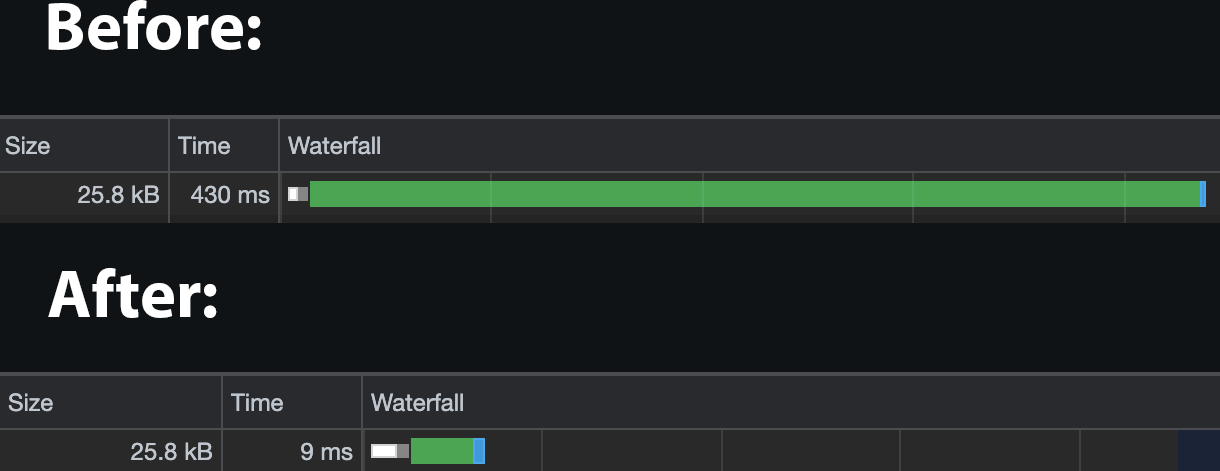

The outcome was quite dramatic. On average, I was able to speed up page load time by 95%, as the image above shows.

Background to the Problem

On Fjolt I use Express to render webpages on the server, and send them to the user. As time has gone on, and I've added more features, the server rendering has increased in complexity - for instance, I recently added line numbers to the code examples, and this required quite a bit of extra processing on the server, relatively speaking. The server is still very fast, but as more complexity is added, there is a chance that the server calculations will take longer and longer.

Ultimately that means a slower page load time for users, and especially for those on slow 3G connections. I have had this on my radar for a while, as not only do I want everyone to enjoy reading content fast, but also because page speed has major SEO implications.

When a page loads on Fjolt, I run an Express route like this:

import express from 'express';

const articleRouter = express.Router();

// Get Singular Article

articleRouter.get('/article/:articleName/', async function(req, res, next) {

// Process article

// A variable to store all of our processed HTML

let finalHtml = '';

// A lot more Javascript goes here

// ...

// Finally, send the Html file to the user

res.send(finalHtml);

});

Every time anyone loads a page, the article is processed from the ground up, all in one go. That means a few different database calls, a few fs.readFile calls, and some quite computationally complex DOM manipulations to create the code linting. It's not worryingly complicated, but it does mean the server is doing a lot of work all the time to serve multiple users on multiple pages.

In any case, as things scale, this will become a problem of increasing size. Luckily, we can use Redis to cache pages, and display them to the user immediately.

Why Use Redis to Cache Web Pages

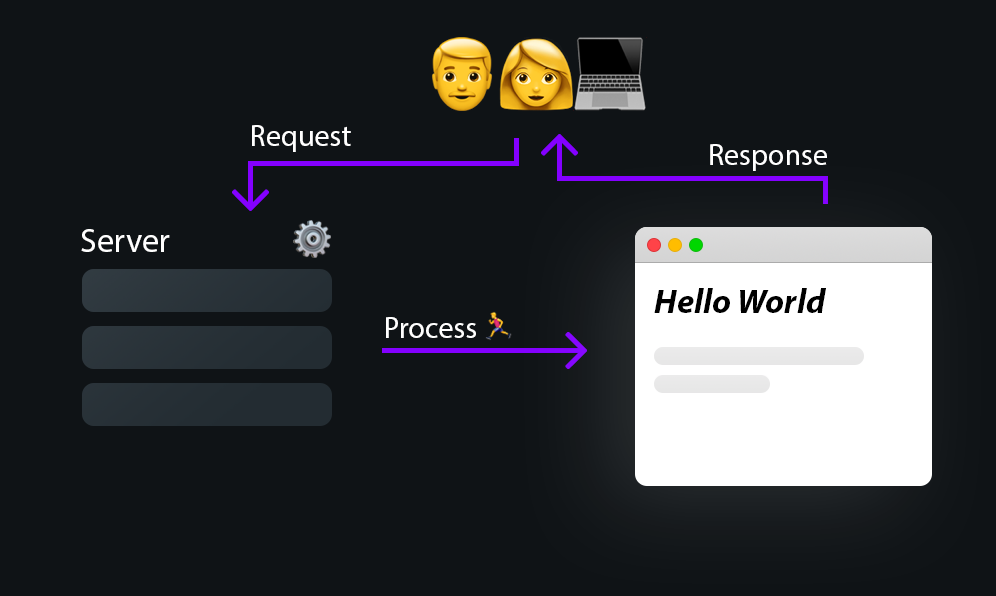

Caching with Redis can turn your SSR website from a slow, clunky behemoth into a blazingly fast, responsive application. When we render things on the server side, we ultimately do a lot of packaging, but the end product is the same - one full HTML page, delivered to the user.

The faster we can package and process the response, the faster the experience is going to be for the user. If you have a page that has a high process load, meaning that a lot of processing is required to create the final, served page, you have two real options:

- Start removing processes and optimise your code. This can be a drawn out process, but will result in faster processing on the server side.

- Use Redis, so the web page is only ever processed in the background, and a cached version is always displayed to the user.

In all honesty, you should probably be doing both - but Redis provides the quickest way to optimise.

Adding Redis to your Express Routes

First things first, we need to install Redis. If you don't have Redis installed on your server or computer, you can find out how to install Redis here.

Next, install the Redis client in your Node.js project by running the following command:

npm i redis

Now that we're up and running, we can start to change our Express Routes. Remember our route from before looked like this?

import express from 'express';

const articleRouter = express.Router();

// Get Singular Article

articleRouter.get('/article/:articleName/', async function(req, res, next) {

// Process article

// A variable to store all of our processed HTML

let finalHtml = '';

// A lot more Javascript goes here

// ...

// Finally, send the Html file to the user

res.send(finalHtml);

});

Let's add in Redis:

import express from 'express';

import { createClient } from 'redis';

const articleRouter = express.Router();

// Get Singular Article

articleRouter.get('/article/:articleName/', async function(req, res, next) {

// Connect to Redis

const client = createClient();

client.on('error', (err) => console.log('Redis Client Error', err));

await client.connect();

const articleCache = await client.get(req.originalUrl);

const articleExpire = await client.get(`${req.originalUrl}-expire`);

// We use redis to cache all articles to speed up content delivery to user

// Parsed documents are stored in redis, and sent to the user immediately

// if they exist

if(articleCache !== null) {

res.send(articleCache);

}

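// Re-render on a cache miss, or when the cached copy has expired.

// Redis returns strings, so convert the timestamp before comparing.

if(articleCache === null || articleExpire === null || Number(articleExpire) < new Date().getTime()) {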

// A variable to store all of our processed HTML

let finalHtml = '';

// A lot more Javascript goes here

// ...

// Finally, send the HTML to the user if nothing was served from the cache

if(articleCache === null) {

res.send(finalHtml);

}

// The cache is refreshed at most once every 10 seconds, so content stays roughly in sync.

// This not only increases speed for the user, but also decreases server load

await client.set(req.originalUrl, finalHtml);

await client.set(`${req.originalUrl}-expire`, String(new Date().getTime() + (10 * 1000)));

}

});
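The route above creates and connects a new Redis client on every request and never closes it. A common alternative is to connect once and reuse the same client. The sketch below is one way that could look, assuming the node-redis v4 client API used above. `makeClientProvider` and `getClient` are hypothetical names, and `createClient` is passed in as an argument so the helper can be tried out without a running Redis server.

```javascript
// Hypothetical helper: returns a function that connects on first use and
// hands back the same connected client on every later call.
function makeClientProvider(createClient) {
  let clientPromise = null;

  return function getClient() {
    if (clientPromise === null) {
      const client = createClient();
      client.on('error', (err) => console.log('Redis Client Error', err));
      // connect() is asynchronous, so we cache the promise. Concurrent
      // requests then share a single connection attempt.
      clientPromise = client
        .connect()
        .then(() => client)
        .catch((err) => {
          // Forget the failed attempt so the next call can retry.
          clientPromise = null;
          throw err;
        });
    }
    return clientPromise;
  };
}

// Usage sketch (assumes `import { createClient } from 'redis'`):
//   const getClient = makeClientProvider(createClient);
//   const client = await getClient(); // inside the route handler
```

With this in place, the route handler would call `getClient()` instead of `createClient()` and `connect()`.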

The changes we've made here aren't too complicated. In our code, we only need to set two Redis keys - one which will store the cached HTML content of the page, and another to store an expiry date, so we can ensure the content is consistently up to date.
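For comparison, Redis can also expire keys on its own, which would remove the need for the second key. The sketch below assumes node-redis v4, where `set` accepts an options object with `EX` (a lifetime in seconds). `cacheWithTtl` is a hypothetical helper and is not part of the code above. The trade-off is that once the key expires, the next visitor has to wait for a full render, whereas the two-key approach keeps serving the old copy while a fresh one is generated.

```javascript
// Hypothetical helper: store rendered HTML and let Redis delete it after
// `seconds`. Assumes a node-redis v4 style client.
async function cacheWithTtl(client, key, html, seconds) {
  await client.set(key, html, { EX: seconds });
}

// Usage sketch inside a route handler:
//   await cacheWithTtl(client, req.originalUrl, finalHtml, 10);
```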

Code Summary

Let's dive into the code in a little more detail:

- First, import Redis, so that it's available to use via createClient.

- Whenever a user goes to our article endpoint, instead of jumping right into parsing and displaying an article, we load up Redis.

- We check for two keys in our Redis database (await client.get('key-name')). One key is req.originalUrl. For example, for this page it might be /article/redis-caching-ssr-site-nodejs. The other is an expiry, which is stored in `${req.originalUrl}-expire`, i.e. /article/redis-caching-ssr-site-nodejs-expire.

- If a cached version of our article exists, we immediately send it to the user - leading to lightning fast page loads.

- If this is the first time anyone has visited this page, or if the expire key has expired, then we have to parse the article the long way.

- You might think that means the page has to be loaded the long way every 10 seconds, but that's not the case. Users are always sent the cached version if it exists. Once the cached copy is more than 10 seconds old, the next request still gets that copy immediately, and the page is then re-rendered in the background to refresh the cache. This update has no impact on user load times.

- Therefore, the only time a slow load will occur is if the page has never been accessed before.
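The steps above can be sketched as one function that is independent of Express. This is a minimal illustration rather than the exact code running on the site: `serveCached`, `render`, and `send` are hypothetical names, `client` is anything with async `get` and `set` methods (such as a connected node-redis client), and the 10 second default mirrors the route above.

```javascript
// Serve a cached page if one exists, and re-render when the cache is
// missing or older than ttlMs. All names here are illustrative.
async function serveCached(client, key, render, send, ttlMs = 10 * 1000, now = Date.now) {
  const cached = await client.get(key);
  const expire = await client.get(`${key}-expire`);

  // Cache hit: respond straight away, before any rendering happens.
  if (cached !== null) {
    send(cached);
  }

  // Redis returns strings, so convert the stored timestamp to a number.
  const isStale = expire === null || Number(expire) < now();
  if (cached !== null && !isStale) {
    return;
  }

  const html = await render();

  // Cache miss: this visitor had to wait for the full render.
  if (cached === null) {
    send(html);
  }

  // Store the fresh copy and a new expiry timestamp.
  await client.set(key, html);
  await client.set(`${key}-expire`, String(now() + ttlMs));
}

// Usage sketch inside an Express handler, where renderArticle stands in
// for your own rendering code:
//   await serveCached(client, req.originalUrl,
//     () => renderArticle(req.params.articleName),
//     (html) => res.send(html));
```

Passing `now` as a parameter is only there to make the expiry logic easy to test. In a real route the default of `Date.now` would be used.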

Since we are using the URL to store the data, we can be certain that unique routes will have unique data stored against them in our Redis database. Ultimately, this led to an improvement in time to first byte (TTFB) of 95% for my website, as shown in the image at the top.
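One caveat worth sketching: in Express, `req.originalUrl` includes the query string, so `/article/foo` and `/article/foo?ref=x` would be cached under separate keys even though they render the same page. If that matters for your site, a small normalising helper is one option. `cacheKeyFor` is a hypothetical name, and dropping the query string and trailing slash is an assumption that only holds if neither affects the rendered HTML.

```javascript
// Hypothetical helper: reduce a request URL to its path so that query
// strings and trailing slashes don't create duplicate cache entries.
function cacheKeyFor(originalUrl) {
  // The base is only needed so that URL can parse a relative path.
  const { pathname } = new URL(originalUrl, 'http://localhost');
  return pathname.length > 1 && pathname.endsWith('/')
    ? pathname.slice(0, -1)
    : pathname;
}

// cacheKeyFor('/article/redis-caching-ssr-site-nodejs/?utm_source=x')
// returns '/article/redis-caching-ssr-site-nodejs'
```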

Caveats and Conclusion

Since my route was becoming overly complex, the time saving here was very large indeed. If your route is not super complex, your time saving may be smaller. That said, you are still likely to get quite a significant speed boost using this method.
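To find out how much there is to gain for a given route, it helps to measure how long the uncached render takes. Here is a rough sketch, assuming Node.js 16 or later, where `performance` is available globally. `timeAsync` is a hypothetical helper.

```javascript
// Hypothetical helper: run an async function and report how long it took
// in milliseconds, alongside whatever the function returned.
async function timeAsync(fn) {
  const start = performance.now();
  const result = await fn();
  return { result, ms: performance.now() - start };
}

// Usage sketch, where renderArticle stands in for your own render code:
//   const { result: html, ms } = await timeAsync(() => renderArticle(name));
//   console.log(`render took ${ms.toFixed(1)}ms`);
```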

This example shows how a simple change can have a massive performance impact on an SSR site, and it's a great use case for Redis. I hope you've found this useful, and find a way to use it on your own sites and applications.
